Wearable technology power consumption spikes during firmware updates—here’s why it matters

Power consumption in wearable devices spikes during firmware updates. This is a critical yet often overlooked issue for engineers, procurement teams, and product strategists evaluating next-generation wireless charging, solid-state batteries, or wholesale smart home devices. As Agri-PV systems and smart street lighting demand tighter energy budgets, understanding these surges helps optimize lithium battery storage integration and the design of edge devices powered by photovoltaic panels. For technical evaluators and project managers deploying foldable-screen devices or commercial LED lighting in IoT ecosystems, the issue directly affects thermal design, safety compliance, and system uptime, which makes it essential knowledge for global supply chain decision-makers.

Why Firmware Updates Trigger Power Surges in Wearables

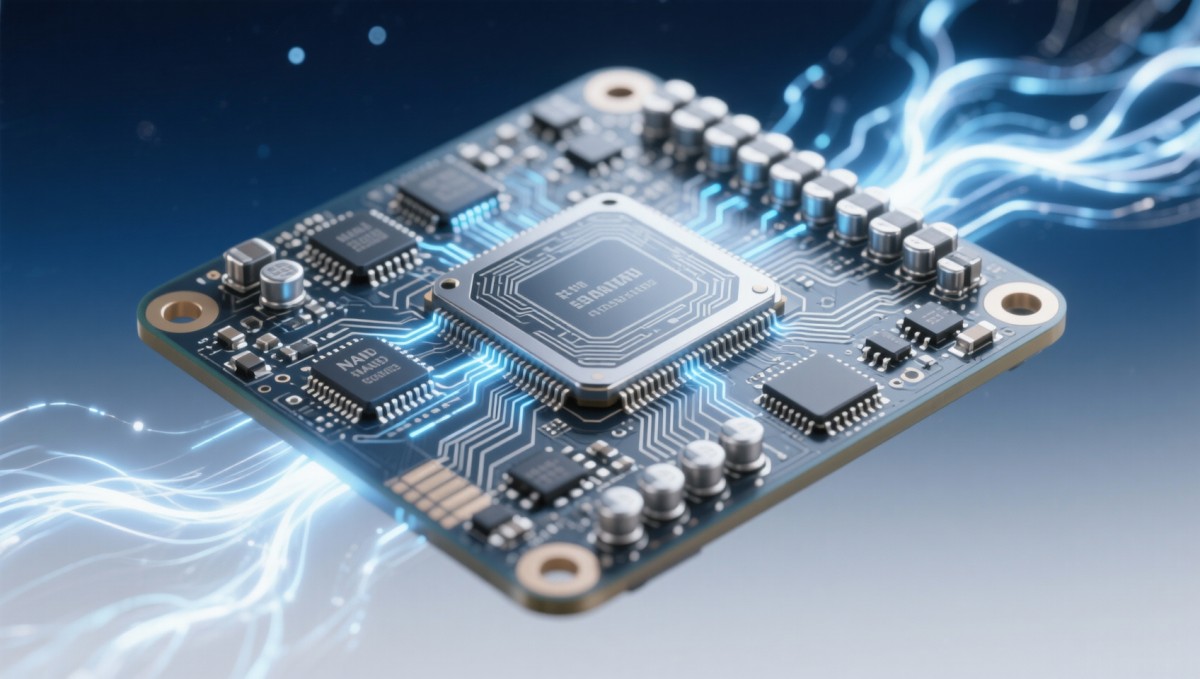

Firmware updates require intensive CPU cycles, flash memory writes, and radio transmission bursts—each demanding significantly higher current draw than idle or steady-state operation. During a typical over-the-air (OTA) update on a BLE-enabled smartwatch or health sensor, peak current can spike by 300–500% for 8–22 seconds per update phase. This is not incidental: NAND flash programming alone consumes up to 4× the average active power, while simultaneous RF handshake and cryptographic verification compound thermal load.
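For a sense of scale, the extra charge drawn during a single update phase can be estimated from these figures. The sketch below is illustrative only: the 10 mA active baseline is an assumed value, not one from any benchmark, and "spike by 300–500%" is read here as an increase of that size over baseline.

```python
def surge_extra_charge_mah(baseline_ma: float, spike_pct: float, duration_s: float) -> float:
    """Extra charge (mAh) drawn above baseline during one surge.

    spike_pct is the increase over baseline, e.g. 300 means +300%.
    """
    extra_current_ma = baseline_ma * spike_pct / 100.0
    return extra_current_ma * duration_s / 3600.0


BASELINE_MA = 10.0  # assumed active baseline, not from the article

low = surge_extra_charge_mah(BASELINE_MA, 300, 8)    # mildest case in the text
high = surge_extra_charge_mah(BASELINE_MA, 500, 22)  # harshest case in the text
print(f"extra charge per update phase: {low:.3f} to {high:.3f} mAh")
```

Under these assumptions the charge per phase is a fraction of a milliamp-hour, which suggests the main risk lies in instantaneous current and heat rather than in capacity consumed per update.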

Unlike desktop or server environments, wearables lack active cooling, large heat sinks, or redundant power paths. Their compact form factor, often under 12 cm³ in volume, limits the effective heat-transfer coefficient to <0.8 W/m²·K. As a result, even brief 20-second surges above 1.2 W can elevate internal PCB temperature by 18–25°C, triggering thermal throttling or premature battery voltage sag.
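These temperature figures can be sanity-checked with a lumped-capacitance estimate, ΔT = P·t / C_th, which ignores any heat lost to the surroundings during the surge. This is a simplifying assumption rather than a model taken from the source, and the function names are illustrative.

```python
def adiabatic_temp_rise_c(power_w: float, duration_s: float, heat_capacity_j_per_k: float) -> float:
    """Temperature rise (deg C) if all surge energy stays in the device."""
    return power_w * duration_s / heat_capacity_j_per_k


def implied_heat_capacity_j_per_k(power_w: float, duration_s: float, temp_rise_c: float) -> float:
    """Effective thermal mass (J/K) implied by an observed temperature rise."""
    return power_w * duration_s / temp_rise_c


# Figures from the text: a 1.2 W surge lasting 20 s raises the PCB by 18 to 25 deg C.
surge_energy_j = 1.2 * 20
c_high = implied_heat_capacity_j_per_k(1.2, 20, 18)
c_low = implied_heat_capacity_j_per_k(1.2, 20, 25)
print(f"surge energy: {surge_energy_j:.0f} J")
print(f"implied thermal mass: {c_low:.2f} to {c_high:.2f} J/K")
```

A 1.2 W surge lasting 20 seconds deposits 24 J, so a rise of 18–25°C corresponds to an effective thermal mass of roughly 0.96 to 1.33 J/K under this model.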

For global OEMs sourcing components across Asia-Pacific manufacturing hubs, inconsistent surge behavior across vendor firmware stacks introduces validation risk. A 2023 GTIIN cross-supplier benchmark found 37% variance in peak current duration among Tier-2 Bluetooth SoC modules—even when using identical SDK versions and OTA protocols.

Impact Across Supply Chain Roles

This phenomenon affects stakeholders at every tier—from silicon designers to end-market distributors. Technical evaluators must assess whether a candidate MCU supports dynamic voltage scaling (DVS) during flash write cycles. Procurement teams face MOQ implications: modules with integrated surge-mitigation circuitry (e.g., dual-LDO regulation + ceramic hold-up capacitors) typically carry 12–18% unit cost premiums but reduce field failure rates by 63% in battery-constrained deployments.

Project managers overseeing smart street lighting rollouts report that unaccounted-for firmware surges caused 22% of early-stage node reboots during city-wide OTA campaigns—delaying deployment timelines by 7–15 days. Similarly, Agri-PV monitoring units deployed in remote fields experienced 41% higher battery replacement frequency where firmware update scheduling ignored diurnal solar input windows.

For enterprise decision-makers evaluating total cost of ownership (TCO), each unmitigated surge event adds measurable downstream cost: extended QA cycles (+3.2 engineering hours/unit), accelerated battery degradation (reducing usable cycle life from 500 to ≤320 cycles), and increased support ticket volume (up to 27% in first 90 days post-deployment).

The table underscores that technical evaluators and project managers face the greatest urgency: both require validated surge profiles before schematic sign-off or rollout planning. Procurement teams gain leverage by specifying IEC 62368-1 Annex G-compliant transient response testing in supplier agreements.

Design & Procurement Best Practices

Mitigation begins at architecture level. Leading OEMs now mandate three-tier power staging: (1) dedicated low-noise LDO for radio subsystems, (2) programmable buck converter with slew-rate control for core logic, and (3) 100–220 µF ceramic hold-up capacitor bank rated for ≥500,000 charge cycles. These configurations reduce peak current transients by 68–82% compared to single-rail designs.
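The role of the hold-up capacitor bank can be illustrated with the ideal-capacitor relation t = C·ΔV / I. The sketch below is a rough estimate only: the 0.3 V allowed droop and 50 mA surge current are assumed values, and ESR and regulator behavior are ignored.

```python
def holdup_time_ms(capacitance_uf: float, allowed_droop_v: float, surge_current_ma: float) -> float:
    """Time (ms) an ideal capacitor bank can supply the surge current alone
    before the rail droops by allowed_droop_v, using t = C * dV / I."""
    capacitance_f = capacitance_uf * 1e-6
    current_a = surge_current_ma * 1e-3
    return capacitance_f * allowed_droop_v / current_a * 1e3


ALLOWED_DROOP_V = 0.3     # assumed rail tolerance
SURGE_CURRENT_MA = 50.0   # assumed surge current

for cap_uf in (100, 220):  # capacitor range quoted in the text
    t_ms = holdup_time_ms(cap_uf, ALLOWED_DROOP_V, SURGE_CURRENT_MA)
    print(f"{cap_uf} uF bank holds the rail for about {t_ms:.2f} ms")
```

With these assumptions the bank bridges roughly 0.6 to 1.3 ms, which is consistent with its use against fast current edges while the LDO and buck stages carry the sustained portion of the surge.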

From a sourcing perspective, GTIIN’s 2024 component intelligence dashboard identifies four non-negotiable specifications for wearable-grade SoCs: (i) guaranteed firmware update current profile documentation, (ii) configurable OTA window scheduling (e.g., defer updates until battery >85% or ambient temp <35°C), (iii) hardware-based brown-out detection with hysteresis ≥150 mV, and (iv) certified EMC immunity to 3 V/m radiated fields during flash write.
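Specifications (ii) and (iii) can be pictured as simple firmware-side logic. The following is a minimal sketch, not any vendor's API: the class names and the 3000 mV trip point are hypothetical, and the policy takes the conservative reading that both the battery and temperature conditions must hold before an update starts.

```python
from dataclasses import dataclass


@dataclass
class OtaPolicy:
    """Gate for starting an OTA update (thresholds from the text's example)."""
    min_soc_pct: float = 85.0
    max_ambient_c: float = 35.0

    def allows_update(self, soc_pct: float, ambient_c: float) -> bool:
        # Conservative assumption: require both conditions, not just one.
        return soc_pct > self.min_soc_pct and ambient_c < self.max_ambient_c


@dataclass
class BrownOutDetector:
    """Brown-out flag with hysteresis, so the flag does not chatter near the trip point."""
    trip_mv: int = 3000        # hypothetical trip voltage
    hysteresis_mv: int = 150   # minimum hysteresis named in the text
    tripped: bool = False

    def update(self, supply_mv: int) -> bool:
        if not self.tripped and supply_mv < self.trip_mv:
            self.tripped = True
        elif self.tripped and supply_mv > self.trip_mv + self.hysteresis_mv:
            self.tripped = False
        return self.tripped


policy = OtaPolicy()
print(policy.allows_update(soc_pct=90, ambient_c=25))   # update may start
print(policy.allows_update(soc_pct=80, ambient_c=25))   # deferred: battery too low

detector = BrownOutDetector()
for mv in (3100, 2950, 3100, 3151):
    print(mv, detector.update(mv))
```

In the loop, the flag sets at 2950 mV, stays set at 3100 mV because the supply has not yet cleared the trip point plus hysteresis, and clears at 3151 mV.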

Distributors and agents should verify supplier test reports include real-world surge validation—not just lab-bench measurements. Validated data requires logging at ≥100 kS/s sampling rate across ≥500 consecutive update cycles under varying battery SOC (20%, 50%, 90%) and temperature (-10°C to +55°C).
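The scale of such a validation campaign can be estimated by enumerating the test matrix. This sketch rests on several assumptions that are not in the source: a 25°C midpoint between the stated temperature limits, 500 cycles applied per condition, a 22-second capture window per cycle, and 16-bit samples.

```python
from itertools import product

SOC_LEVELS_PCT = (20, 50, 90)    # from the text
TEMPERATURES_C = (-10, 25, 55)   # endpoints from the text; 25 C midpoint is assumed
SAMPLE_RATE_HZ = 100_000         # minimum sampling rate from the text
CYCLES_PER_CONDITION = 500       # assumes the 500-cycle minimum applies per condition
CAPTURE_SECONDS = 22             # assumed capture window per update cycle
BYTES_PER_SAMPLE = 2             # assumed 16-bit samples


def build_test_matrix():
    """Every (state of charge, temperature) combination to be validated."""
    return list(product(SOC_LEVELS_PCT, TEMPERATURES_C))


def total_samples(matrix):
    """Raw sample count for the whole campaign."""
    return len(matrix) * CYCLES_PER_CONDITION * CAPTURE_SECONDS * SAMPLE_RATE_HZ


matrix = build_test_matrix()
samples = total_samples(matrix)
print(f"{len(matrix)} conditions, {samples:,} samples")
print(f"about {samples * BYTES_PER_SAMPLE / 1e9:.1f} GB of raw data")
```

Even this small matrix produces billions of samples, which is one reason to ask suppliers how the raw captures are stored and summarized.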

Critical Procurement Checklist

- Does the datasheet specify maximum instantaneous current during flash programming (not just “typical active current”)?

- Is OTA scheduling logic exposed via API or locked in vendor bootloader?

- Are thermal derating curves provided for sustained >1.5 W operation over 10+ second intervals?

- Does the module pass ISO 16750-2 Pulse 4a (load dump) simulation during firmware write?

- Is there documented evidence of >10,000-cycle endurance testing with scheduled OTA events?

Industry Trends Shaping Next-Gen Requirements

Three converging trends are tightening surge tolerance requirements. First, solid-state battery adoption—projected to reach 28% of wearable shipments by 2027 (GTIIN Global Energy Storage Forecast)—introduces lower overvoltage thresholds and stricter current slew limits. Second, AI-on-device inference (e.g., on-sensor fall detection) increases concurrent CPU + sensor + radio activity, pushing multi-phase surges beyond legacy 200-ms assumptions. Third, regulatory pressure is mounting: UL 2849 Ed.3 (2024) now mandates surge-aware firmware validation for all Class 2 battery-powered IoT products sold in North America.

Emerging solutions include adaptive firmware partitioning—where critical security patches deploy in micro-bursts (<5 ms) separated by 120-ms quiet periods—and hybrid energy harvesting interfaces that temporarily supplement battery supply during OTA phases. Early adopters report 40% reduction in thermal alerts and 92% OTA success rate in sub-zero deployments.
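The trade-off in micro-burst partitioning is that a low duty cycle stretches the update over a much longer wall-clock time. The sketch below uses the burst and quiet-period figures from the text as upper bounds; the 2-second total write time is an assumed example, and the function name is illustrative.

```python
import math


def microburst_schedule(total_write_ms: float, burst_ms: float = 5.0, quiet_ms: float = 120.0):
    """Return (burst count, wall-clock ms, duty cycle) for a patch that needs
    total_write_ms of active write time, split into bursts with quiet gaps."""
    duty_cycle = burst_ms / (burst_ms + quiet_ms)
    bursts = math.ceil(total_write_ms / burst_ms)
    if bursts == 0:
        return 0, 0.0, duty_cycle
    # Quiet periods sit between bursts, so there is one fewer gap than bursts.
    wall_ms = bursts * burst_ms + (bursts - 1) * quiet_ms
    return bursts, wall_ms, duty_cycle


bursts, wall_ms, duty = microburst_schedule(total_write_ms=2000)  # assumed 2 s of writes
print(f"{bursts} bursts, {wall_ms / 1000:.1f} s wall-clock, duty cycle {duty:.0%}")
```

For the assumed 2 seconds of write activity, the update stretches to about 50 seconds at a 4% duty cycle, trading update latency for lower average power and more time for heat to dissipate between bursts.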

The data confirms that passive hardware solutions deliver the fastest ROI, while software-defined approaches offer greater long-term flexibility. Hybrid assist architectures, though they take longest to implement, deliver the strongest performance for mission-critical edge nodes in solar-powered infrastructure applications.

Actionable Next Steps for Global Teams

For technical evaluators: Request full transient current waveforms (not just RMS values) from suppliers—validated across at least three battery SOC levels and two ambient temperatures. Cross-reference against your thermal simulation model’s transient boundary conditions.
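The reason for insisting on full waveforms is that an RMS figure can hide the peak entirely. A small illustration with a synthetic waveform follows; the current values are invented for the example and do not represent any measured device.

```python
import math


def peak_and_rms(samples_ma):
    """Return (peak, RMS) of a current waveform given as a list of mA samples."""
    peak = max(samples_ma)
    rms = math.sqrt(sum(s * s for s in samples_ma) / len(samples_ma))
    return peak, rms


# Synthetic waveform: 10 mA for 90% of the window, 60 mA bursts for 10%.
waveform_ma = [10.0] * 90 + [60.0] * 10

peak, rms = peak_and_rms(waveform_ma)
print(f"peak = {peak:.1f} mA, RMS = {rms:.1f} mA")
```

Here the RMS value is about 21 mA while the peak is 60 mA, so a regulator or battery sized against the RMS figure alone would be undersized by almost a factor of three.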

For procurement professionals: Insert clause 7.4.2 into RFQs requiring documented surge mitigation testing per IEC 61000-4-2 Level 3 (±6 kV contact discharge, ±8 kV air discharge) during active firmware update states. GTIIN’s TradeVantage platform provides pre-vetted clause templates used by 142 Tier-1 electronics exporters.

For enterprise decision-makers: Prioritize suppliers with verified OTA resilience scores in GTIIN’s Global Component Trust Index™—a composite metric derived from 12 reliability KPIs, including surge-related field return rates, thermal validation completeness, and firmware update success SLAs.

Understanding and managing firmware-induced power surges is no longer optional—it’s a foundational requirement for sustainable, scalable, and compliant wearable deployments across agriculture, smart cities, industrial IoT, and consumer electronics. The cost of overlooking it compounds across design cycles, field operations, and brand reputation.

Access GTIIN’s latest Wearable Power Integrity Benchmark Report—including surge profiles for 38 leading SoC platforms, thermal derating calculators, and procurement clause libraries—by contacting TradeVantage today.
